Designing the AI Startup Factory

Like any average startup founder, I have too many ideas and too few hands to implement them. Everyone shouts "FOCUS," "FOCUS," but the twenty ideas in my to-do list aren't listening.

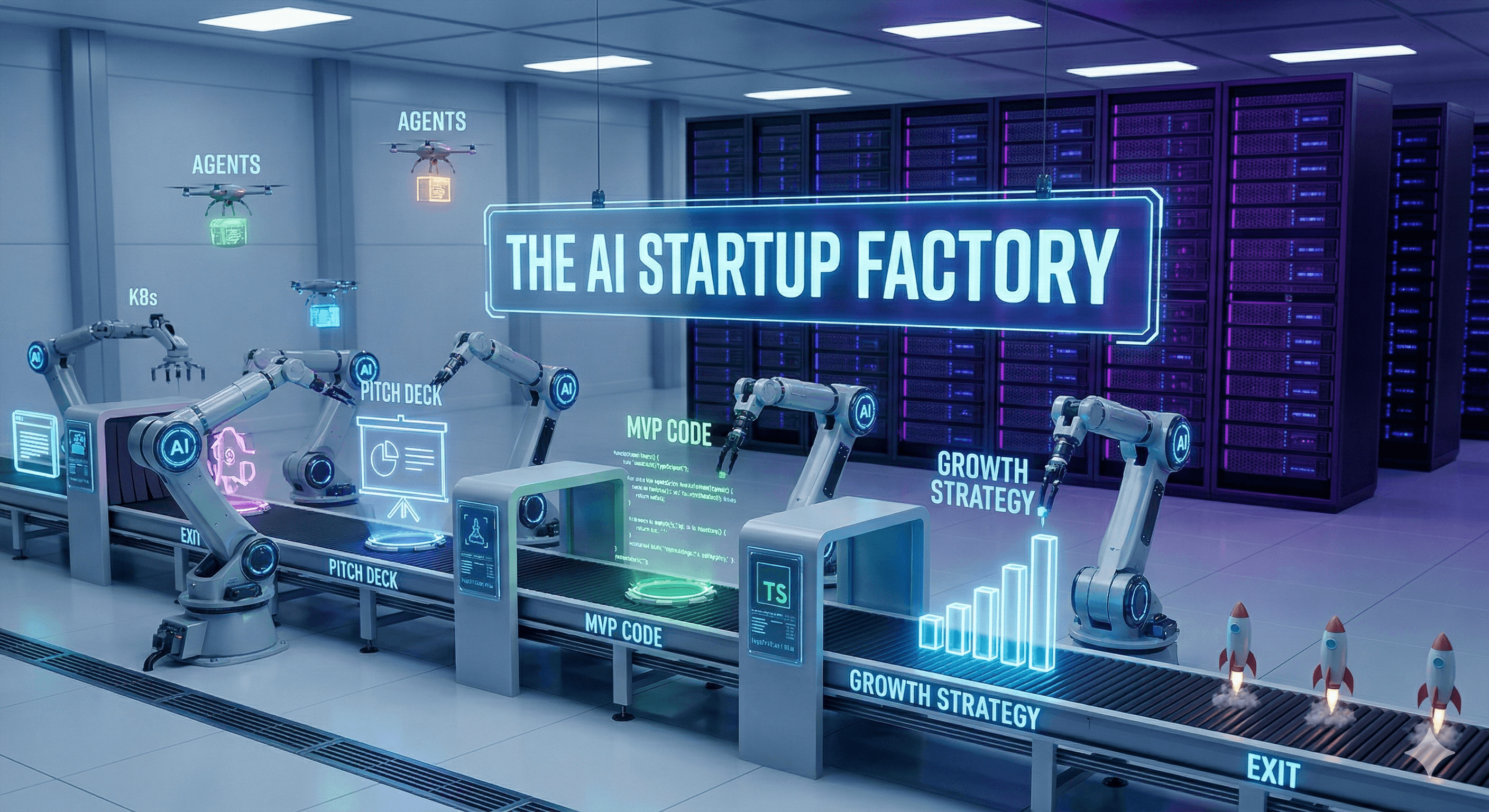

For years, I had to choose, sacrificing one idea for another. But now those times are coming to an end. I am building an AI Startup Factory, and this article outlines what it looks like.

This is an ongoing project currently in active development. Many things described here already exist and are working, but many are just ideas yet to be implemented.

Philosophy

Before writing any code, I want to establish some ground rules. These are non-negotiable principles that the framework will follow.

Replicability

Many modern AI agent frameworks are developed with a local-first mindset, where agents run on local machines. I don't think this approach scales. From day one of working with AI agents, I have run them in the cloud.

Every change to the deployment or agent configuration is persisted to Git. This decision has a tradeoff: every change carries the overhead of a commit and a Docker image update.

The benefits are significant: I don't have to worry about configurations being destroyed overnight. I can take my agents and scale them to dozens or even hundreds in a matter of minutes.

Startups will follow the same patterns. The bus factor is essentially nonexistent. Any startup made with this factory can be replicated in minutes if you have access to the agents, workflows, and configuration files.

An important limitation introduced as part of this philosophy is that everything used in the framework and in the startups must be definable as code (IaC). Design files, email sequences, workflows, domains, campaigns—everything.

Independence

In contrast to most agentic frameworks that promote a "security-first" approach, I have the advantage of running all agents in a secure cloud environment. This allows me to prioritize independence over restrictive security measures.

By default, all my agents have full permissions and don't have to ask me for anything. They are designed to be proactive. Instead of thinking of them as my assistants, I have made them think of me as their assistant.

For a startup, a day without work being done can be the difference between success and being late to the party.

Independence also means that I don't have to maintain my agentic system myself. The agents are self-improving and have access to all the tools they need for self-reflection and technical self-service.

Expert-Driven (MoE)

Instead of relying on a single agent or an army of hundreds of agents, I chose a middle path. As in the real world, the more people join a team, the harder it becomes to manage them all.

Having a single agent would overload its context and cause it to mess up projects. It would need to take care of all roles, which would require a complex layer of prompt orchestration on top of a single agent. That is a problem I want to avoid.

On the other hand, having hundreds of agents would make it hard to ensure context quality. Every startup might end up with different tech stacks, different configurations, and no shared infrastructure. That is another problem I want to avoid.

Having a few experts covering core roles like CEO, CTO, and CMO is sufficient. They will have the ability to spawn sub-agents, but those are small, disposable processes managed 100% by an expert.

The experts themselves are independent and self-improving. When a single agent improves, it benefits all startups in the ecosystem and also saves tokens.

Fault Tolerance

The startup factory works like a distributed system. There is a layer between agents that makes asynchronous communication possible and effective. Tasks run in parallel, and results are evaluated later. Failures don't block progress, and failed tasks can be retried.

The design of the startup factory is heavily inspired by event-driven architecture, a well-known pattern in software engineering.
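The non-blocking retry behavior can be sketched with a plain in-memory queue. This is only an illustration under stated assumptions: the names `Task`, `QueueReport`, and `drainQueue` are hypothetical, and the real factory would presumably delegate retries to its orchestration layer rather than to a loop like this.

```typescript
// Hypothetical sketch: a failed task goes back to the end of the queue,
// so one failure never blocks the tasks waiting behind it.
interface Task {
  name: string;
  attempts: number; // failed attempts so far
  run: () => void; // throws on failure
}

interface QueueReport {
  completed: string[];
  abandoned: string[];
}

function drainQueue(tasks: Task[], maxAttempts: number): QueueReport {
  const queue = [...tasks];
  const report: QueueReport = { completed: [], abandoned: [] };
  while (queue.length > 0) {
    const task = queue.shift()!;
    try {
      task.run();
      report.completed.push(task.name);
    } catch (_err) {
      task.attempts += 1;
      if (task.attempts < maxAttempts) {
        queue.push(task); // retry later, after the other tasks
      } else {
        report.abandoned.push(task.name); // give up once the budget is spent
      }
    }
  }
  return report;
}
```

A task that fails once and then succeeds simply completes later than its neighbors, while a task that keeps failing is reported as abandoned instead of stalling the queue.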

The agent application itself expects agents to fail. When an agent is working on something, its progress is persisted. So when something unexpected happens and the task is retried, the agent knows what it was working on and can pick up where it left off without spending tokens again on work it has already finished.
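A minimal sketch of this resume behavior, assuming an in-memory checkpoint store stands in for whatever durable storage the factory actually uses. `Step`, `CheckpointStore`, and `runWithCheckpoints` are hypothetical names introduced only for illustration.

```typescript
// Hypothetical sketch: save the result of every finished step so that a
// retried run skips the steps that already completed.
type Step = { id: string; run: () => string };

class CheckpointStore {
  private done = new Map<string, string>(); // step id -> saved result

  get(id: string): string | undefined {
    return this.done.get(id);
  }

  save(id: string, result: string): void {
    this.done.set(id, result);
  }
}

// Runs steps in order, reusing saved results instead of recomputing them.
function runWithCheckpoints(steps: Step[], store: CheckpointStore): string[] {
  const results: string[] = [];
  for (const step of steps) {
    const saved = store.get(step.id);
    if (saved !== undefined) {
      results.push(saved); // finished before the failure, so skip it
      continue;
    }
    const result = step.run(); // may throw; earlier checkpoints survive
    store.save(step.id, result);
    results.push(result);
  }
  return results;
}
```

Calling `runWithCheckpoints` a second time with the same store after a crash re-executes only the step that failed and the steps after it.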

System Design

The easiest architectural decision was the programming language for the agent harness. For the past four years, I have primarily used TypeScript for everything. There are millions of libraries, SDKs, and frameworks—both agentic and otherwise. Our entire infrastructure will be written in TypeScript, including all UI, backend, architecture, and communication. The bigger question was: how do we deploy this?

I am somewhat of an old-school developer who likes just putting things into a VPC, connecting a database and queue, and designing code around failure points. However, after thorough investigation, I found something better—something I had never tried but that looks like magic: Temporal.

Temporal gives you event sourcing out of the box and allows your code execution to be recovered at any point in time. This reduces code complexity and makes the system highly observable and reliable. As the database for Temporal, I decided to keep it simple and chose PostgreSQL, which is very familiar to me.

Initially, I thought of running the entire agent thinking loop inside Temporal. This would make agents extremely durable, and almost no tokens would be lost to errors. However, after careful consideration, I decided to use a mixed approach with Temporal for orchestration and LangGraph for sub-agent loops.

LangGraph is a library from the LangChain project that allows you to build long-running, cyclic agent loops while keeping control over execution. You do this by defining a graph with nodes and edges. This allows us to have more reliable loops that can be designed separately for each of our experts.
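To make the nodes-and-edges idea concrete, here is a hand-rolled sketch in plain TypeScript. It deliberately does not use the LangGraph API; every name in it (`LoopState`, `nodes`, `edges`, `runGraph`) is hypothetical, and the review node is a stand-in for a model call.

```typescript
// Hand-rolled illustration of a node/edge agent loop. This is NOT the
// LangGraph API; all names here are hypothetical.
interface LoopState {
  draft: string;
  revisions: number;
  approved: boolean;
}

type NodeName = "write" | "review" | "end";
type WorkNode = Exclude<NodeName, "end">;

// Nodes transform the state.
const nodes: Record<WorkNode, (s: LoopState) => LoopState> = {
  write: (s) => ({ ...s, draft: `draft v${s.revisions + 1}`, revisions: s.revisions + 1 }),
  review: (s) => ({ ...s, approved: s.revisions >= 2 }), // stand-in for a model call
};

// Edges pick the next node; the conditional edge after "review" closes the loop.
const edges: Record<WorkNode, (s: LoopState) => NodeName> = {
  write: () => "review",
  review: (s) => (s.approved ? "end" : "write"),
};

// Walks the graph until it reaches "end" or the step budget runs out.
function runGraph(start: NodeName, initial: LoopState, maxSteps: number): LoopState {
  let current: NodeName = start;
  let state = initial;
  let steps = 0;
  while (current !== "end" && steps < maxSteps) {
    state = nodes[current](state);
    current = edges[current](state);
    steps += 1;
  }
  return state;
}
```

The step budget is the "control over execution" part: the loop can cycle as long as the edges send it back, but never beyond `maxSteps`.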

One of our core philosophies is a mixture-of-experts model, so agents need to be able to communicate freely with each other. It would be a waste not to use a structured approach for this, such as the A2A protocol. I have never tried it, but it looks standardized and promising.

All of this will be running inside a K8s cluster hosted on my $50 VPS on Hetzner. K8s felt like an obvious choice due to the nature of Temporal's scalability requirements.

As a cherry on top, we need to decide which model our agents will use. As an experienced architect, I would love to postpone this decision and keep the system as flexible as possible. So, using OpenRouter feels like the most reasonable solution.
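One way to keep the model choice open is to treat the model id as plain configuration. The sketch below builds a request for OpenRouter's OpenAI-compatible chat completions endpoint without sending it; `buildChatRequest` and the `HttpRequest` shape are hypothetical helpers, and the model id is left as a caller-supplied string.

```typescript
// Sketch: the model id is just a string parameter, so switching models
// needs no code change. Helper names are hypothetical.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface HttpRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

const OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions";

// Builds the request description only; sending it is left to the caller.
function buildChatRequest(apiKey: string, model: string, messages: ChatMessage[]): HttpRequest {
  return {
    url: OPENROUTER_URL,
    method: "POST",
    headers: {
      Authorization: `Bearer ${apiKey}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify({ model, messages }),
  };
}
```

Because the function only describes the request, each expert can read its model id from configuration and the same code path serves all of them.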

As part of the replicability rule, all architecture required for project deployment is set up as code (IaC). I am a huge fan of Terraform, but I also love TypeScript. So I use Pulumi, which lets me set up the entire startup factory in one click on the hosting service of choice.

A normal person would deploy all of this to something like AWS. But that would make the framework less replicable because not everyone is ready to use AWS. So I have chosen a plain VPS with K8s (k3s), Traefik, and cert-manager.

Global Tools Repository

There needs to be a stack of tools that agents can use. Some tools will be used only by specific experts, but some need to exist in every agent. Here is the list of global tools every agent has access to:

1. Version Control (GitHub)

2. Secrets Management (Bitwarden)

3. File System Access (Linux)

4. Web Access (Chrome)

5. Long-Term Memory (Mem0)

6. Coordination Layer (PostgreSQL)

7. Communication Gateway (Discord)

8. Observability Layer (Prometheus, Grafana, PostHog)

9. Voice Capabilities (ElevenLabs)

10. Image and Video Generation (Fal.ai)

11. Scraping Infrastructure (Apify)

12. Email + Cloud (Google CLI)

Global Skills Repository

Google Ecosystem: https://github.com/googleworkspace/cli

• https://skills.sh/anthropics/skills/skill-creator

• https://skills.sh/vercel-labs/skills/find-skills

• https://skills.sh/vercel-labs/agent-browser/agent-browser

• https://skills.sh/charon-fan/agent-playbook/self-improving-agent

Harness Design

While designing the harness, I tried to keep the balance between giving agents complete autonomy and implementing an effective self-improvement cycle.

The problem with most existing self-improving agents is that there is no precise way to determine whether the agent actually improves, because there is no clear metric to optimize. Agents like OpenClaw are designed to be general and fit all needs, so the design of the incentive system falls on the shoulders of users.

With the Startup Factory, we have a clear niche, which allows us to design an effective self-improvement loop by establishing correct incentives.

An important restriction of the harness design is that experts MUST NOT do any work themselves. They need to use sub-agents defined inside the startup repo to do the actual work. Experts are replaceable. Their memory exists today and may not exist tomorrow. Sub-agents also have memory, which is tied specifically to the project.

This restriction gives us a more self-sustaining startup that can be operated by anyone. It also gives us a clearer execution loop for agents, as they play the role of orchestrators rather than employees. That is actually what executives are supposed to do.

Self-Improvement Loop

Real self-improvement isn't about an agent installing skills it needs. It is about structured experimentation. For any experimentation to work, we need to be able to evaluate its results.

The startup lifecycle gives us a unique opportunity to evaluate the quality of a startup team. Having just one team working on an idea would be straightforward. But with a single team, we would have no way to tell whether a failure comes from a bad idea or from a weak team.

The self-improvement loop of the startup factory is inspired by how Git works—specifically, Git forks. Imagine every startup as a Git repository containing the complete system required to run this startup and take it to the next stage. Imagine making two forks of the same company at the MVP stage and applying two different strategies to build an MVP.

Now imagine evaluating each fork and merging the winning version into the core repository, repeating this process until the very last stage. The losing fork gets "learned from" and discarded, avoiding a merging nightmare.

At every stage, there will be a winning and a losing startup team. Not only does the startup get the stronger version, but the startup team itself evolves with every startup evaluation across all projects the startup factory has.
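One round of this fork-and-merge cycle can be sketched as follows. The scoring itself is out of scope here; `Fork`, `RoundResult`, and `runRound` are hypothetical names, and breaking ties in favor of the first fork is an arbitrary assumption.

```typescript
// Hypothetical sketch of one fork-and-merge round.
interface Fork {
  id: string;
  strategy: string;
  score: number; // produced by whatever evaluation the stage uses
}

interface RoundResult {
  merged: Fork; // winner, merged into the core repository
  lessons: string[]; // notes kept from the losing fork before it is discarded
}

function runRound(a: Fork, b: Fork): RoundResult {
  // Ties go to the first fork (an arbitrary assumption of this sketch).
  const [winner, loser] = a.score >= b.score ? [a, b] : [b, a];
  return {
    merged: winner,
    lessons: [`${loser.strategy} scored ${loser.score} vs ${winner.score}`],
  };
}
```

Only the winner is ever merged, which is what keeps the core repository free of merge conflicts between competing strategies.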

Core Workflows

Every expert follows the same meta-loop:

Universal Expert Loop:

1. Listen (events / triggers)

2. Decide (prioritize + plan)

3. Delegate (spawn sub-agents)

4. Validate (check outputs)

5. Persist (commit to repo)

6. Reflect (self-improve)

They NEVER:

• Do work themselves

• Hold long-term memory

• Bypass artifacts
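The loop above can be sketched as a single function that receives an event and a set of hooks, one per step. The hook names mirror the steps, but the interfaces themselves (`ExpertHooks`, `LoopSummary`, `handleEvent`) are hypothetical and not part of any existing framework.

```typescript
// Hypothetical interfaces; each hook maps to one step of the expert loop.
interface LoopSummary {
  accepted: number;
  rejected: number;
}

interface ExpertHooks<TEvent, TPlan, TResult> {
  decide: (event: TEvent) => TPlan[]; // 2. prioritize + plan
  delegate: (plan: TPlan) => TResult; // 3. a sub-agent does the work
  validate: (result: TResult) => boolean; // 4. check outputs
  persist: (result: TResult) => void; // 5. commit to repo
  reflect: (summary: LoopSummary) => void; // 6. self-improve
}

// 1. Listen: the caller invokes this function once per incoming event.
function handleEvent<TEvent, TPlan, TResult>(
  event: TEvent,
  hooks: ExpertHooks<TEvent, TPlan, TResult>,
): LoopSummary {
  const summary: LoopSummary = { accepted: 0, rejected: 0 };
  for (const plan of hooks.decide(event)) {
    const result = hooks.delegate(plan); // the expert never does the work itself
    if (hooks.validate(result)) {
      hooks.persist(result); // only validated output becomes an artifact
      summary.accepted += 1;
    } else {
      summary.rejected += 1;
    }
  }
  hooks.reflect(summary);
  return summary;
}
```

Note that the expert's only outputs are persisted artifacts and the summary passed to `reflect`, which matches the rule that experts orchestrate rather than execute.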

Another core workflow agents have is "dreaming." Every night, each expert wakes up and starts reorganizing its long-term memory.

Persistent Storage

I differentiate five types of artifacts that require storage:

1. Secrets

2. Sources

3. Files

4. Memory

5. Working items

As much as I wanted to put everything into Git, it would be impossible. So there are a few different places where things are stored.

Secrets are API keys, configurations, passwords, etc. They are stored only in Bitwarden and environment variables. Secrets are what changes the most during a fork, so the application design must make it possible to run what is effectively a separate startup just by swapping the secrets.

Sources are large files such as videos, images, etc. They are stored on Google Drive. All of them are referenced with a path across the system, so the purpose of Google Drive is just storage of those files. Making storage replaceable is under consideration (NextCloud). Sources don't get forked and just grow together for all projects for potential reuse.

Files are what get forked and used most of the time. Files are stored in GitHub. They are forked and very carefully updated for constant agent or startup improvement.

Memory is stored in the Mem0 database and accumulated globally, regardless of project or fork. It creates a constant improvement loop for agents and projects.

Working items such as TODOs, leads, research, messages, posts, etc., are stored in a special PostgreSQL database with a schema designed specifically for the startup factory. Working items don't get forked and are usually needed only at runtime, so there is no need to persist them for long or reuse them.
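The five artifact types and their storage rules can be summarized as a small lookup table. The backends come from the descriptions above; the `forkBehavior` labels are my own shorthand for those descriptions, and all type and function names are hypothetical.

```typescript
// Lookup table for the five artifact types. The forkBehavior labels are an
// interpretation of the prose, not an official part of the framework.
type ArtifactKind = "secret" | "source" | "file" | "memory" | "workingItem";
type ForkBehavior = "replaced" | "shared" | "forked" | "runtimeOnly";

interface StoragePolicy {
  backend: string;
  forkBehavior: ForkBehavior;
}

const STORAGE: Record<ArtifactKind, StoragePolicy> = {
  secret: { backend: "Bitwarden + environment variables", forkBehavior: "replaced" },
  source: { backend: "Google Drive", forkBehavior: "shared" },
  file: { backend: "GitHub", forkBehavior: "forked" },
  memory: { backend: "Mem0", forkBehavior: "shared" },
  workingItem: { backend: "PostgreSQL", forkBehavior: "runtimeOnly" },
};

// Lists the artifact kinds that are actually copied when a startup is forked.
function kindsCopiedOnFork(): ArtifactKind[] {
  return (Object.keys(STORAGE) as ArtifactKind[]).filter(
    (kind) => STORAGE[kind].forkBehavior === "forked",
  );
}
```

Under this reading, a fork copies only the Git-tracked files; everything else is either swapped (secrets), shared across projects (sources, memory), or short-lived (working items).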

Teaching AI how to use all these tools is crucial.

CEO

A big architectural decision stands right here. Is the CEO an ultimate operator or a replaceable part of a startup team? Normally, you would need some kind of AI to run experiments. However, we are working under the assumption that AI isn't deterministic: if we take two exact replicas of a CEO and have each validate the same startup, we will get two different sets of results.

With this in mind, we can freely let the CEO be part of the startup team and rely on a pure algorithm to run the experimentation engine for us.

Tools

1. Legal Management (DocuSeal)

2. Financial Infrastructure (Stripe)

Skills

• https://skills.sh/anthropics/skills/pptx

• https://skills.sh/anthropics/skills/xlsx

Workflows

1. Weekly Sprint Planning

2. Weekly Retrospective

3. Daily Board Report

4. Every 60 minutes: Agent task progress check-in

5. When gateway message is ready

CTO

Tools

1. Deployment Capability (Coolify)

2. Cloud Coding Environment (VS Code)

3. Design Base (Stitch)

Skills

Stitch Skills: https://github.com/google-labs-code/stitch-skills

Superpowers: https://github.com/obra/superpowers

• https://skills.sh/anthropics/skills/frontend-design

• https://skills.sh/nextlevelbuilder/ui-ux-pro-max-skill/ui-ux-pro-max

• https://skills.sh/shadcn/ui/shadcn

• https://skills.sh/vercel-labs/next-skills/next-best-practices

• https://skills.sh/jeffallan/claude-skills/architecture-designer

• https://skills.sh/currents-dev/playwright-best-practices-skill/playwright-best-practices

• https://skills.sh/supercent-io/skills-template/security-best-practices

• https://skills.sh/ajmcclary/coolify-manager/coolify-manager

Workflows

1. Task Execution Pipeline

2. Architecture Evolution

3. Bug Resolution Loop

CMO

Tools

1. Social Media Scheduling (Postbridge)

2. Advertisement Capabilities (Google Ads, Meta Ads, TikTok Ads)

3. SEO / Web Analytics (Google Analytics)

4. Video & Image Generation (Fal.ai)

Skills

Marketing skills repo (30+ skills): https://github.com/coreyhaines31/marketingskills

Fal.ai skills repo: https://github.com/fal-ai-community/skills

• https://skills.sh/remotion-dev/skills/remotion-best-practices

Workflows

1. Content Engine (Daily Growth Loop)

2. SEO + Organic Growth Loop (Weekly)

Agent Workspace Design

With agent workspace design, I didn't want to reinvent the wheel and took inspiration from OpenClaw. The agent workspace is OpenClaw-compatible and startup-agnostic: it only defines how the agent should behave and doesn't give any context about the startup it is working on.

This is intentional to allow the agent workspace to evolve independently from the startup workspace and from the startup factory framework itself.

There will be a requirement to launch many different agents steered specifically to projects or positions. For this reason, I am initiating an open-source project that could help make agent steering more reliable. Announcements are yet to be made.

Startup Lifecycle

While designing this system, I took on the challenge of giving it creative freedom while having guardrails to guide it. I don't want it to be just a static workflow because that won't be effective. But I also don't want it to be a messy agentic experience where the model does whatever it imagines without structure, because that won't be replicable.

I have been an active part of the startup world for the past five years. I have had hundreds of ideas, built a dozen of them, and sold two. This gives me enough experience to actually accomplish this goal. So I give my agents a framework that gamifies startup development.

I see startups as my collection of Pokémon. Pokémon can evolve from being tiny, useless creatures to gigantic legendaries that can carry whole generations of new Pokémon. The Pokémon universe does not define exactly what each Pokémon needs to do to evolve, yet it defines the required preconditions.

In the same way, I mapped out eight stages of startup development. Each has requirements for moving to the next stage. It gives tips on what has to be done during this stage yet leaves creative freedom on how to do it.

The biggest challenge I faced was the process of moving from one stage to another. How can the same agent that works on the idea also evaluate its stage of development? This feels like a conflict of interest—the same kind of conflict you get when the person teaching you also evaluates your knowledge.

The solution was elegant. Instead of asking a single agent, we ask 100. One hundred unique agents with unique personalities relevant to the stage will decide if a startup is ready to evolve to the next stage. It is called swarm intelligence. A common example of this is MiroFish. I am not yet sure if I will use MiroFish or design my own swarm intelligence, but that is definitely going to be the evolution judge.

Each startup has three chances to evolve. After each failed attempt, swarm intelligence provides a detailed feedback document that the startup team must address in order to pass the next time.

After three unsuccessful attempts, the idea is considered closed. And this isn't bad. I consciously chose not to punish agents for failed ideas, because that would push them to be overly positive. I actually do the opposite: I reward them for closing ideas that fail.
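The three-attempt gate can be sketched as a vote followed by a bounded revise loop. The pass rule is not specified above, so this sketch assumes a simple majority; `Judge`, `swarmVote`, and `tryEvolve` are hypothetical names, and real judges would be agents rather than plain functions.

```typescript
// Hypothetical sketch of the evolution gate. The majority threshold is an
// assumption; the article does not define the pass rule.
type Judge = (submission: string) => { approve: boolean; feedback: string };

interface Verdict {
  passed: boolean;
  approvals: number;
  feedback: string[]; // collected from judges who rejected the submission
}

function swarmVote(submission: string, judges: Judge[], threshold = 0.5): Verdict {
  let approvals = 0;
  const feedback: string[] = [];
  for (const judge of judges) {
    const opinion = judge(submission);
    if (opinion.approve) approvals += 1;
    else feedback.push(opinion.feedback);
  }
  return { passed: approvals / judges.length > threshold, approvals, feedback };
}

// Gives the team up to maxAttempts tries, revising between failed attempts.
function tryEvolve(
  submission: string,
  revise: (current: string, feedback: string[]) => string,
  judges: Judge[],
  maxAttempts = 3,
): { status: "evolved" | "closed"; attempts: number } {
  let current = submission;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const verdict = swarmVote(current, judges);
    if (verdict.passed) return { status: "evolved", attempts: attempt };
    if (attempt < maxAttempts) current = revise(current, verdict.feedback);
  }
  return { status: "closed", attempts: maxAttempts };
}
```

Because the judges are separate from the team that calls `revise`, the team never grades its own work, which is the conflict of interest the swarm is meant to remove.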

We will talk about incentive design and harness in future sections. For now, let's dive into the startup lifecycle.

Stage 1: Idea

You have a concept. You think people have this problem. Now you're testing whether it's real.

Artifacts

1. Detailed research on 10 closest competitors

2. 50 real potential buyers complaining about the problem or asking for a solution

3. Technical feasibility assessment

4. Go-to-market strategy

5. Pitch deck

Stage 2: Validation

The idea is finalized, but you don't yet know if people will like your solution.

Artifacts

1. User stories

2. Product design document

3. Waitlist of 100 people

4. 10 influencers with more than 5K followers ready to promote the product

Stage 3: MVP

You know there is a place in the market for your idea. You know exactly how the final solution will look, and you even have people interested.

Artifacts

1. Technical architecture

2. Publicly accessible MVP

3. Feedback from 20 beta testers

Stage 4: Launch

You have the product working and delivering real value that people are ready to pay for.

Artifacts

1. Completed launch checklist

2. Social media accounts with a total of 1,000+ followers

3. Ads with positive ROI

4. 10 paid clients

Stage 5: Distribution

The product is validated and generates revenue.

Artifacts

1. $5K MRR with >20% margin

Stage 6: Product-Market Fit (PMF)

Your product is validated, has real users, and starts to grow on its own.

Artifacts

1. 100+ mentions of your brand across the internet (not from you)

2. 10% of customers refer at least 1 friend per month

3. $20K MRR with >50% margin

4. Public rating of >4.5 stars with 100+ reviewers

Stage 7: Support

You are a well-known brand solving a problem people care about. You listen to what clients say and iterate to grow the business to maximum capacity.

Artifacts

1. (If applicable) Client retention higher than industry average

2. Public rating of >4.5 stars with 1,000+ reviewers

3. $100K MRR with >80% margin

Stage 8: Exit

You grew the startup to a point where it can be handed over to a buyer who would be ready to pay a decent amount for it.

Artifacts

1. Unit economics

2. Financial forecast

3. At least 5 interested buyers

Closing

You failed one of the stages and decided that dropping the idea would save you time and money.

Artifacts

1. Startup retrospective

2. Public-facing report about the startup progress and decision
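The revenue requirements of Stages 5 through 7 are numeric, so they can be encoded as data and checked mechanically. This sketch covers only the MRR and margin artifacts; the other artifacts (mentions, referrals, ratings) are not modeled. It assumes MRR must be at least the stated amount and margin strictly above the stated percentage. `RevenueGate` and `highestRevenueStage` are hypothetical names.

```typescript
// Revenue gates taken from Stages 5-7. Only MRR and margin are modeled.
interface RevenueGate {
  stage: string;
  minMrrUsd: number; // assumed to be "at least this much"
  minMargin: number; // fraction; must be strictly exceeded
}

const REVENUE_GATES: RevenueGate[] = [
  { stage: "Distribution", minMrrUsd: 5000, minMargin: 0.2 },
  { stage: "Product-Market Fit", minMrrUsd: 20000, minMargin: 0.5 },
  { stage: "Support", minMrrUsd: 100000, minMargin: 0.8 },
];

// Returns the last consecutive stage whose revenue gate is met, or null.
function highestRevenueStage(mrrUsd: number, margin: number): string | null {
  let reached: string | null = null;
  for (const gate of REVENUE_GATES) {
    if (mrrUsd >= gate.minMrrUsd && margin > gate.minMargin) {
      reached = gate.stage;
    } else {
      break; // stages are sequential, so stop at the first unmet gate
    }
  }
  return reached;
}
```

For example, $25K MRR at a 60% margin clears the Distribution and Product-Market Fit gates but not Support, which needs both more revenue and a margin above 80%.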

Startup Workspace Design

The core philosophy states that everything needs to be replicable, including the startups themselves. Why deal with 30 different services for storing data when (almost) everything can just be stored in Git? The only exceptions are API keys and assets like videos, images, voices, etc.

The exact structure of the repo will vary from startup to startup, but the core folders and files are defined as part of the framework.

The most important rule is that the startup workspace must be logically separated from the startup factory framework itself. All skills, MCPs, and configurations defined here are made specifically for this project, not for the startup factory.

This keeps the repo structure universal, so the startup factory framework can evolve independently of the startup workspace framework. Anyone could clone this repo and run a business even without using the agents.

Here is how the common structure of a startup workspace looks:

1. .<startup-id>

Plugin defining all required AI infrastructure. Follows the Claude convention (https://code.claude.com/docs/en/plugins#plugin-structure-overview):

• plugin.json → Basic metadata for the plugin

• skills/ → Skills related specifically to this project

• agents/ → Core sub-agents required to operate the startup

• hooks/ → Hooks required for agents working on this startup

• .mcp.json → MCP servers required to operate the startup

• .lsp.json → LSP servers for technologies used in the startup

• bin/ → Executable files automatically added to PATH

2. docs/

• business_stages/ → Folders with artifacts for every stage of the startup (managed by CEO)

• operations/ → Operations required to run the startup (managed by CEO)

• technology/ → Documentation related to the technology part of the startup (managed by CTO)

• growth/ → Documentation related to growth (marketing, sales, retention) (managed by CMO)

3. internals/

Folder with internal tooling used for marketing, deployment, etc.:

• infrastructure/ → IaC that spins up infrastructure and deploys everything in one click (Pulumi and Coolify preferred)

• content-studio/ → Remotion repository with workflows for content creation

4. apps/

Client-facing services including backend, frontend, landing pages, etc.

5. packages/

Libraries shared between different apps:

• configurations/ → Global configurations for the startup (name, logo, colors, etc.). The core library required for simple replicability of the same startup with different names and other configs. All internals and apps must commit to using it instead of hardcoding values.

• components/ → UI components described with Storybook used on the frontend, landing, and content studio. Using clean components and Storybook makes content studio 10x more powerful.

• schemas/ → Interfaces and classes shared between applications

• utils/ → Utility functions that can be shared between different applications

6. docker/

Folder with Dockerfiles describing how to build each of the apps and internals.

7. docker-compose.yml

File that launches all apps, internals, and MCPs with a single command.

8. .env.requirements

Secrets required to operate this startup.
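A startup could check its secrets at boot with a few lines of code. The format of `.env.requirements` is not specified here, so this sketch assumes one variable name per line with `#` comments; `parseRequirements` and `missingSecrets` are hypothetical helpers, and the variable names in the usage note are made up.

```typescript
// Assumed file format: one variable name per line, "#" starts a comment line.
function parseRequirements(fileText: string): string[] {
  return fileText
    .split("\n")
    .map((line) => line.trim()) // also strips "\r" from Windows line endings
    .filter((line) => line.length > 0 && !line.startsWith("#"));
}

// Returns the names of required secrets that are unset or empty in env.
function missingSecrets(
  required: string[],
  env: Record<string, string | undefined>,
): string[] {
  return required.filter((name) => !env[name]);
}
```

With a file listing `STRIPE_API_KEY` and `FAL_KEY` and an environment that sets only the first, `missingSecrets` reports `FAL_KEY`, so a fork with incomplete secrets fails fast instead of half-starting.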

The startup factory is framework-agnostic, yet it was designed by a person who writes everything in TypeScript, so the structure is heavily inspired by how TypeScript monorepos are usually structured. All startups will also be made with TypeScript to simplify deployment and support.
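As an illustration of the `configurations/` package idea, a shared typed config lets a fork change its name or branding in one place. The fields and default values below are placeholders, and `StartupConfig` and `createConfig` are hypothetical names.

```typescript
// Hypothetical shape of the shared configuration; values are placeholders.
interface StartupConfig {
  name: string;
  logoPath: string;
  primaryColor: string;
}

const DEFAULT_CONFIG: StartupConfig = {
  name: "Example Startup",
  logoPath: "assets/logo.svg",
  primaryColor: "#000000",
};

// Apps and internals read from this object instead of hardcoding values.
function createConfig(overrides: Partial<StartupConfig> = {}): Readonly<StartupConfig> {
  return Object.freeze({ ...DEFAULT_CONFIG, ...overrides });
}
```

A fork would call `createConfig({ name: "Fork B" })` and inherit everything else, which is what makes the same startup easy to replicate under a different name.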